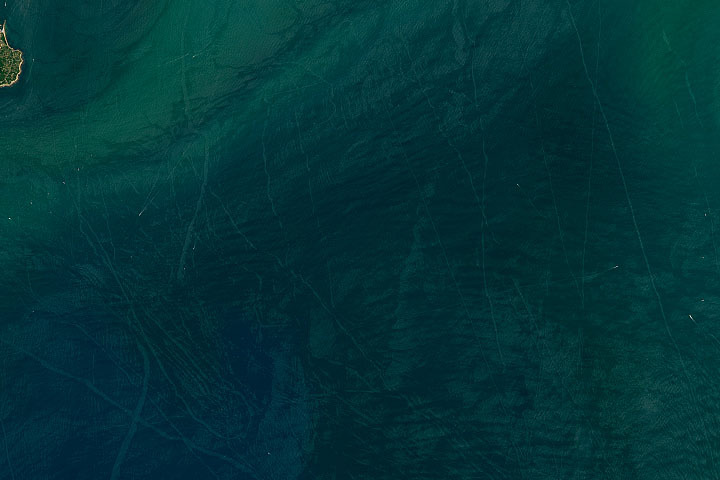

Every month on Earth Matters, we offer a puzzling satellite image. The July 2024 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.

How to answer. You can use a few words or several paragraphs. You might simply tell us the location, or you can dig deeper and offer details about what satellite and instrument produced the image, what spectral bands were used to create it, or what is compelling about some obscure feature. If you think something is interesting or noteworthy, tell us about it.

The prize. We cannot offer prize money or a trip on the International Space Station, but we can promise you credit and glory. Well, maybe just credit. Within a week after a puzzler image appears on this blog, we will post an annotated and captioned version as our Image of the Day. After we post the answer, we will acknowledge the first person to correctly identify the image at the bottom of this blog post. We also may recognize readers who offer the most interesting tidbits of information. Please include your preferred name or alias with your comment. If you work for or attend an institution that you would like to recognize, please mention that as well.

Recent winners. If you have won the puzzler in the past few months, or if you work in geospatial imaging, please hold your answer for at least a day to give less experienced readers a chance.

Releasing comments. Savvy readers have solved some puzzlers after a few minutes. To give more people a chance, we may wait 24 to 48 hours before posting comments. Good luck!

Update on July 23, 2024: This false-color image shows a plume, likely an orographic cloud, streaming from near the summit of Antarctica’s Mount Siple. Colors in this image represent brightness temperature, which is useful for distinguishing the relative warmth (orange and pink) or coolness (purple and blue) of various features. Congratulations to Ivan Kordač for being the first to correctly identify the image’s polar location. Read more about the area in “Stately Mount Siple.”

Every month on Earth Matters, we offer a puzzling satellite image. The June 2024 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.

Update on June 4, 2024: This image shows greenhouses in eastern China. Congratulations to James Varghese for being the first to correctly identify the feature and its location. Read more about the area in “A Greenhouse Boom in China.”

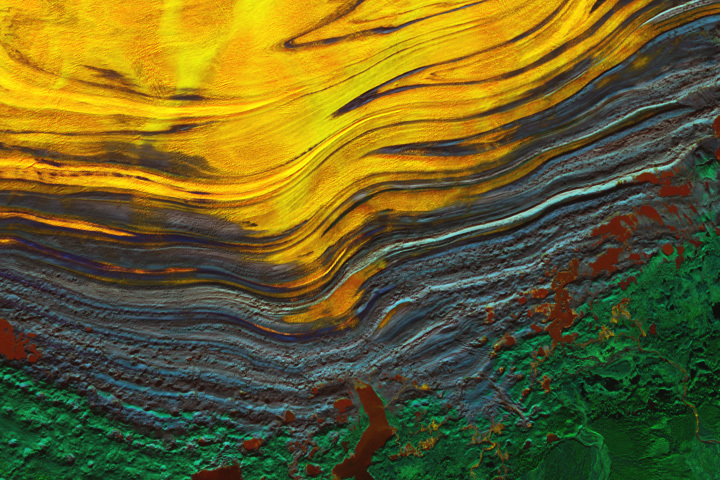

Update on April 23, 2024: This image shows Sortebræ, a large surge-type glacier in eastern Greenland, on September 6, 1986. Congratulations to Steward Redwood for being the first to correctly identify the glacier. Read more about the glacier and see how it has retreated in recent decades in our Image of the Day story.

Every month on Earth Matters, we offer a puzzling satellite image. The March 2024 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.

Update on March 11, 2024: This image shows Spirit Lake, located in south-central Washington, on April 26, 2023. Congratulations to Ivan Kordač for being the first to correctly identify the lake. Special mention goes to David Sherrod, who pointed out the lake’s floating log raft and mentioned a recent debris flow in the region (which occurred several weeks after this image was acquired). Read more about the lake in our Image of the Day story.

Every month on Earth Matters, we offer a puzzling satellite image. The February 2024 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.

Update on January 23, 2024: This image shows Tukpahlearik Creek in northwest Alaska. Congratulations to Luis Martinez for being the first to correctly identify the feature and its location. Special mention to Steve, who provided a reason for the creek’s unusual color. Read more about the image in our Image of the Day story, which describes several hypotheses for the creek’s orange hue.

Every month on Earth Matters, we offer a puzzling satellite image. The January 2024 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.

Update on January 9, 2024: This image shows terrain near the confluence of the Wisconsin and Mississippi rivers. Puzzler images are typically acquired by satellites, but this month we chose to feature a photograph taken by an astronaut on the International Space Station. Congratulations to Pierre-Luc Grenon for being the first to correctly identify both rivers. Read more about the image in our Image of the Day story.

Every month on Earth Matters, we offer a puzzling satellite image. The December 2023 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.

Update on November 27, 2023: This false-color image shows the terminus of southeastern Alaska’s Malaspina Glacier, the largest piedmont glacier in the world. Congratulations to Juan Antolin Arnau for being the first to correctly identify the feature and its location. Read more about the image in our Image of the Day story.

Every month on Earth Matters, we offer a puzzling satellite image. The November 2023 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.

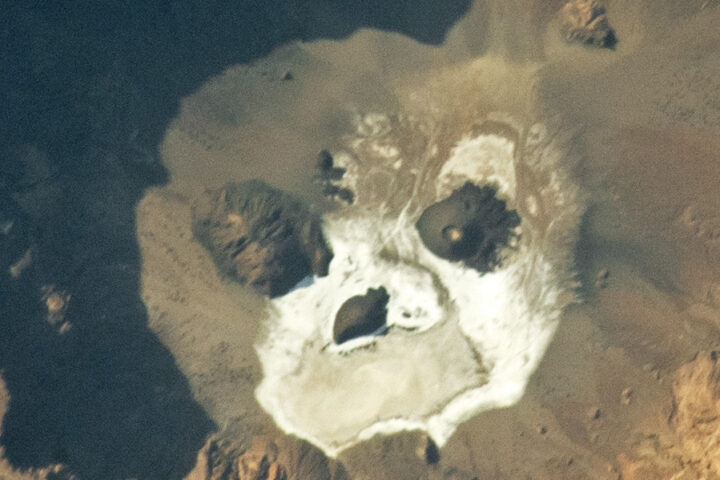

Update on October 31, 2023: This puzzler image shows Trou au Natron, a deep caldera and soda lake in northern Chad. Congratulations to Warren Hansen for being the first to correctly identify the feature and its location. Read more about the image in our Image of the Day story.

Every month on Earth Matters, we offer a puzzling satellite image. The October 2023 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.

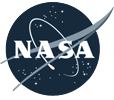

Update on September 26, 2023: This puzzler image shows an ephemeral arc spanning a fjord in Greenland. It may have been caused by a wave from a calving iceberg or an underwater plume of freshwater rising to the surface. Read more about the image in our Image of the Day story.

Every month on Earth Matters, we offer a puzzling satellite image. The September 2023 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.
