Every month on Earth Matters, we offer a puzzling satellite image. The July 2024 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.

How to answer. You can use a few words or several paragraphs. You might simply tell us the location, or you can dig deeper and offer details about what satellite and instrument produced the image, what spectral bands were used to create it, or what is compelling about some obscure feature. If you think something is interesting or noteworthy, tell us about it.

The prize. We cannot offer prize money or a trip on the International Space Station, but we can promise you credit and glory. Well, maybe just credit. Within a week after a puzzler image appears on this blog, we will post an annotated and captioned version as our Image of the Day. After we post the answer, we will acknowledge the first person to correctly identify the image at the bottom of this blog post. We also may recognize readers who offer the most interesting tidbits of information. Please include your preferred name or alias with your comment. If you work for or attend an institution that you would like to recognize, please mention that as well.

Recent winners. If you have won the puzzler in the past few months, or if you work in geospatial imaging, please hold your answer for at least a day to give less experienced readers a chance.

Releasing comments. Savvy readers have solved some puzzlers after a few minutes. To give more people a chance, we may wait 24 to 48 hours before posting comments. Good luck!

Update on July 23, 2024: This false-color image shows a plume—likely an orographic cloud—streaming from near the summit of Antarctica’s Mount Siple. Colors in this image represent brightness temperature, which is useful for distinguishing the relative warmth (orange and pink) or coolness (purple and blue) of various features. Congratulations to Ivan Kordač for being the first to correctly identify the image’s polar location. Read more about the area in “Stately Mount Siple.”

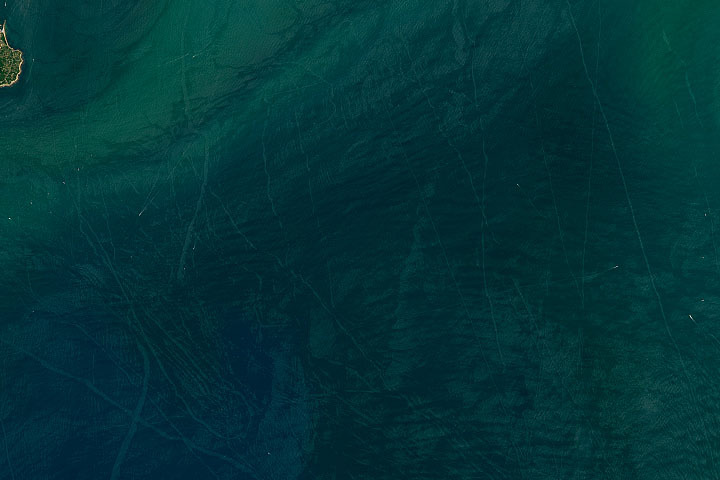

Every month on Earth Matters, we offer a puzzling satellite image. The June 2024 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.

Update on June 4, 2024: This image shows greenhouses in eastern China. Congratulations to James Varghese for being the first to correctly identify the feature and its location. Read more about the area in “A Greenhouse Boom in China.”

On May 11, 2024, the day-night band of VIIRS (Visible Infrared Imaging Radiometer Suite) on the Suomi NPP satellite spotted the aurora borealis over the United States during the strongest geomagnetic storm in over two decades. That same night, observers on the ground captured spectacular photographs of the dazzling light. The following photos represent just a handful of those shot by citizen scientists as part of NASA’s Aurorasaurus project, which tracks aurora sightings around the planet.

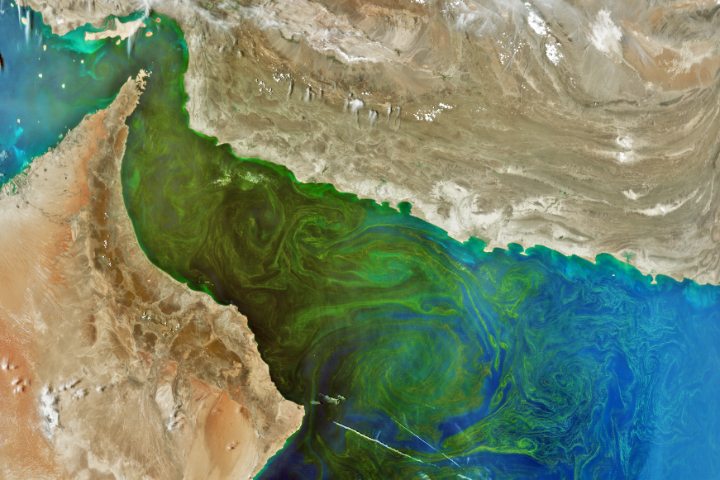

Update on May 21, 2024: This image shows a phytoplankton bloom in the Gulf of Oman. It was acquired on March 17, 2024, less than two months after the launch of NASA’s PACE (Plankton, Aerosol, Cloud, ocean Ecosystem) satellite. Congratulations to Dan Taylor for being the first to correctly identify the bloom and its location. Special mention goes to Robert Taylor for providing a detailed answer, and to Timotheus Fasana, who was the only person to correctly guess the satellite and instrument. Read more about the bloom in our Image of the Day story.

Every month on Earth Matters, we offer a puzzling satellite image. The April 2024 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting. The location of this puzzler might be relatively easy to identify, so bonus points go to those who weigh in on the “what” and “why.”

Update on April 23, 2024: This image shows Sortebræ, a large surge-type glacier in eastern Greenland, on September 6, 1986. Congratulations to Steward Redwood for being the first to correctly identify the glacier. Read more about the glacier and see how it has retreated in recent decades in our Image of the Day story.

Every month on Earth Matters, we offer a puzzling satellite image. The March 2024 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.

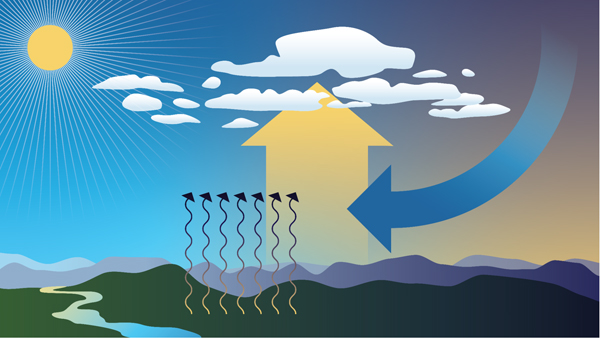

Energy from the Sun warms our planet, and changes in sunlight can also cause changes in temperature, clouds, and wind. Clouds are ever-changing and offer clues about what is happening in the atmosphere. Cumulus-type clouds growing in the distance tell you that air is moving upward. Clouds moving at different levels in the atmosphere show you which direction the wind is blowing at each level. The presence of clouds, especially high cirrus clouds, can even tell you how much moisture or water vapor is available.

Let’s look at the process by which clouds form.

Clouds form when air cools and water vapor condenses. Air cools as it rises, so rising air is necessary to initiate the formation of a cloud. There are four processes that cause air to rise in our atmosphere.

1) Convection

This process starts when the Sun warms the surface of the Earth. The air right above the surface is warmer than the rest of the air and it starts to rise. If this portion or parcel of air rises enough to cool to the dew point, condensation occurs and a cloud forms. You see this most often on hot summer days when beautiful cumulus towers develop and grow.

Comparison Example: You may have experienced convection when you sat around a campfire and felt the warm air rising from right above the fire.

2) Convergence

This process happens when air at the surface comes together or converges and leads to air moving up. These clouds are not puffy cumulus like those seen through convection.

3) Fronts

Weather fronts, like a strong cold front, push air upward quite drastically and can produce strong cumulonimbus clouds that can lead to severe weather conditions. Different types of fronts produce different amounts of lifting depending on the conditions. Dry lines* can also produce enough lifting to cause severe weather in the Southwest.

*A dry line is similar to a cold or warm front, but instead of separating air masses of different temperatures, it is a boundary separating dry air from moist air. Dry lines occur frequently in the Southwest United States (Texas and Oklahoma) when moist air from the Gulf of Mexico meets dry desert air from the Southwest.

4) Topography

Lifting (the upward movement of air) can also be caused by slopes and mountains. You may have noticed that in a mostly flat area, clouds tend to gather around the one place that has some slope. Scientists call this process orographic lifting, meaning the upward movement of air forced by mountains in that region. You will often see clouds and even rain developing on the side of the mountain facing the wind, the windward side, while the other side of the mountain, the leeward side, stays much drier.
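In each of the four lifting processes above, a cloud forms once the rising air cools to its dew point. As a rough illustration, the short Python sketch below estimates how high a parcel of surface air must rise before that happens. It relies on a common rule of thumb (often called Espy's approximation) of about 125 meters of lift per degree Celsius of difference between air temperature and dew point. The function name and the sample temperatures are made up for this example, and real cloud bases can differ from this estimate.

```python
def cloud_base_height_m(air_temp_c: float, dew_point_c: float) -> float:
    """Estimate the height (meters above the surface) where rising air saturates.

    Assumption: Espy's rule of thumb, roughly 125 m of lift for every
    degree Celsius between the surface air temperature and the dew point.
    """
    spread = air_temp_c - dew_point_c
    if spread < 0:
        raise ValueError("dew point cannot be higher than air temperature")
    return 125.0 * spread


# Hypothetical hot summer afternoon: 30 C air with an 18 C dew point.
print(cloud_base_height_m(30.0, 18.0))  # 1500.0
```

The smaller the gap between temperature and dew point, the less lifting is needed, which is one reason humid days can produce low cloud bases.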

But what if you were able to block the Sun from shining? What would happen to the clouds? A total solar eclipse is exactly that: a natural experiment in which the Sun is blocked for a short amount of time. In general, during a solar eclipse, clouds produced by convection will be the most impacted. As the Moon blocks the light from the Sun, the surface cools and the convection process weakens, resulting in cloud dissipation, or the disappearance of clouds. Note that clouds produced by frontal boundaries, like a strong cold front, will show little to no change.

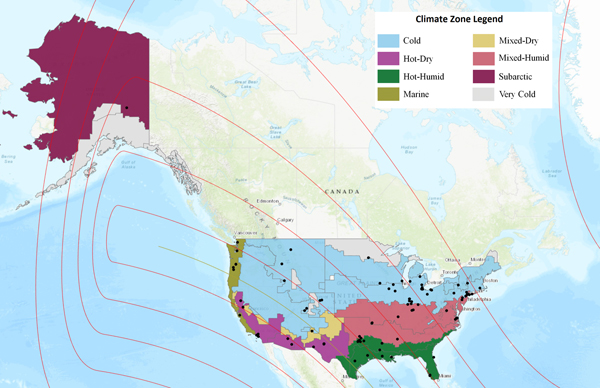

During the upcoming eclipse on April 8, 2024, scientists will be looking at cloud and temperature changes to see if there are any variations across the different climate regions the eclipse will impact. This research is being led by NASA scientist Ashlee Autore at NASA’s Langley Research Center in Hampton, Virginia. Autore has been looking at citizen scientist observations collected during the annular eclipse of October 14, 2023, and has been comparing them to nearby automated surface observing system (ASOS) observations. So far, she has been considering how cloud coverage changes during an eclipse. Using the U.S. climate zones, as defined by the International Energy Conservation Code, she divided citizen scientist and ASOS observations into their respective climate zones and noted some initial findings.

Of the citizen scientist and ASOS sites that reported no overall cloud change between the beginning and end of the eclipse, the majority saw overcast skies. Clear skies were also likely to remain unchanged during the eclipse. Warmer climate zones (hot-humid, hot-dry) reported the greatest percentage of clear skies that remained clear, while colder climate zones (cold, marine) reported the greatest percentage of overcast skies that remained overcast. The mixed-humid climate zone also reported a high percentage of continuous overcast skies. These cases of continuous clear skies and continuous overcast skies also were among the locations with the best citizen scientist/ASOS agreement, meaning that citizen scientists reported the same cloud conditions as the nearby ASOS sites. In hot-dry climate zones—such as Los Angeles, California, and Carlsbad, New Mexico—ASOS stations had good agreement with citizen scientists reporting continuous clear skies. In the cold climate zones—such as Toledo, Ohio, and Indianapolis, Indiana—ASOS stations had good agreement with citizen scientists reporting continuous overcast skies. Newark, New Jersey, and Philadelphia, Pennsylvania, which are in the mixed-humid climate zone, also had good agreement with citizen scientists reporting continuous overcast skies.
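To make the idea of "agreement" by climate zone concrete, here is a minimal Python sketch of how paired reports could be grouped and compared. It is not Autore's actual method or data: the record layout, the cloud-cover labels, and the `agreement_by_zone` function are all assumptions made up for illustration.

```python
from collections import defaultdict

# Hypothetical paired reports (not real data). Each record holds:
# (climate zone, citizen start, citizen end, ASOS start, ASOS end)
observations = [
    ("hot-dry", "clear", "clear", "clear", "clear"),
    ("hot-dry", "clear", "scattered", "clear", "clear"),
    ("cold", "overcast", "overcast", "overcast", "overcast"),
    ("mixed-humid", "overcast", "overcast", "overcast", "overcast"),
    ("mixed-humid", "broken", "overcast", "overcast", "overcast"),
]


def agreement_by_zone(records):
    """Return {zone: fraction of pairs where the citizen scientist and the
    ASOS station reported the same sky cover at both the start and the end}."""
    totals = defaultdict(int)
    matches = defaultdict(int)
    for zone, c_start, c_end, a_start, a_end in records:
        totals[zone] += 1
        if (c_start, c_end) == (a_start, a_end):
            matches[zone] += 1
    return {zone: matches[zone] / totals[zone] for zone in totals}


for zone, fraction in agreement_by_zone(observations).items():
    print(f"{zone}: {fraction:.0%} agreement")
```

A real analysis would also need a rule for deciding which ASOS station counts as "nearby" for each citizen scientist report, which this sketch leaves out.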

The upcoming “GLOBE Eclipse Challenge: Clouds and Our Solar-Powered Earth” asks people from around the world to volunteer and collect cloud observations throughout the day. Participants can collect cloud observations from March 15 to April 15, 2024, and make observations at different times of the day. For those experiencing any portion of the solar eclipse on Monday, April 8, 2024, we ask that you collect air temperature and cloud observations at least an hour before and an hour after maximum eclipse for your location. We will use all these observations to study how clouds change throughout the day and how they are impacted by changes in sunlight and temperature (such as by an eclipse). This year’s eclipse will give scientists more data points and will allow for further analysis.

Update on March 11, 2024: This image shows Spirit Lake, located in south-central Washington, on April 26, 2023. Congratulations to Ivan Kordač for being the first to correctly identify the lake. Special mention goes to David Sherrod, who pointed out the lake’s floating log raft and mentioned a recent debris flow in the region (which occurred several weeks after this image was acquired). Read more about the lake in our Image of the Day story.

Every month on Earth Matters, we offer a puzzling satellite image. The February 2024 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.

Update on January 23, 2023: This image shows Tukpahlearik Creek in northwest Alaska. Congratulations to Luis Martinez for being the first to correctly identify the feature and its location. Special mention to Steve, who provided a reason for the creek’s unusual color. Read more about the image in our Image of the Day story, which describes several hypotheses for the creek’s orange hue.

Every month on Earth Matters, we offer a puzzling satellite image. The January 2024 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.

Update on January 9, 2024: This image shows terrain near the confluence of the Wisconsin and Mississippi rivers. Puzzler images are typically acquired by satellites, but this month we chose to feature a photograph taken by an astronaut on the International Space Station. Congratulations to Pierre-Luc Grenon for being the first to correctly identify both rivers. Read more about the image in our Image of the Day story.

Every month on Earth Matters, we offer a puzzling satellite image. The December 2023 puzzler is shown above. Your challenge is to use the comments section to tell us where it is, what we are looking at, and why it is interesting.
