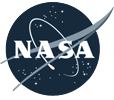

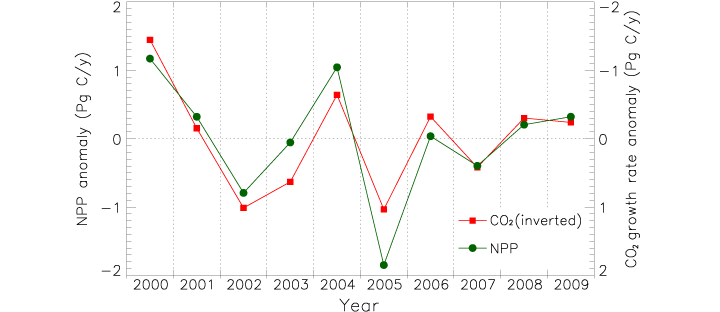

James Hrynyshyn over at Class M has an interesting post about a graph comparing two anomalies (differences from normal): the anomaly in net primary productivity (more commonly abbreviated NPP, a measure of the amount of carbon a plant takes from the atmosphere and uses to grow) and the anomaly in the growth rate of carbon dioxide in the atmosphere. The graph is from the same paper as our recent image: Drought Slows Plant Growth, 2000–2009. The two variables are plotted together, but “carbon dioxide growth rate anomaly” (a somewhat complex concept in itself) is inverted. Unless you read the caption, this gives the impression that the growth rate of carbon dioxide in the atmosphere falls if net primary productivity falls, and rises if net primary productivity rises, when, in fact, the opposite is true.
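To make the inversion problem concrete, here is a minimal Python sketch. The numbers are invented for illustration and are not taken from the paper; the function names are my own. It computes anomalies for two hypothetical series, then shows that flipping the sign of one of them (which is what an inverted axis does visually) reverses the apparent relationship.

```python
from math import sqrt

def anomalies(values):
    """Return each value's difference from the series mean (the 'normal')."""
    mean = sum(values) / len(values)
    return [v - mean for v in values]

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    ax, ay = anomalies(xs), anomalies(ys)
    covariance = sum(a * b for a, b in zip(ax, ay))
    return covariance / sqrt(sum(a * a for a in ax) * sum(b * b for b in ay))

# Hypothetical yearly values in arbitrary units (NOT data from the paper).
# They are chosen so that years with high NPP have slow CO2 growth.
npp = [53.0, 54.0, 52.5, 53.5, 52.0]
co2_growth = [1.9, 1.6, 2.2, 1.8, 2.4]

npp_anom = anomalies(npp)
co2_anom = anomalies(co2_growth)
co2_anom_inverted = [-a for a in co2_anom]  # what an inverted axis displays

r_actual = pearson(npp_anom, co2_anom)             # negative for these values
r_as_drawn = pearson(npp_anom, co2_anom_inverted)  # same size, opposite sign
print(f"actual: {r_actual:+.2f}, as drawn: {r_as_drawn:+.2f}")
```

With the axis inverted, the two lines rise and fall together even though the underlying quantities move in opposite directions, and that is the first impression a reader takes away.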

I agree with Class M: if the first impression a graph gives is the opposite of what the data show, and a close reading of the caption and annotations is required for understanding, the graph is destined to cause misunderstandings. Just for the record, this is what I would have done:

Reference: Zhao, M., Running, S.W. (2010). Drought-induced reduction in global terrestrial net primary production from 2000 through 2009. Science, 329(5994), 940–943.

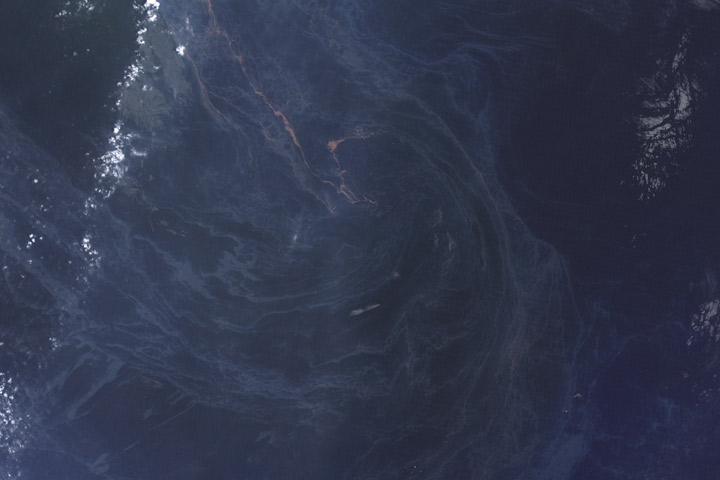

We sort through a lot of imagery at the Earth Observatory, and not all of it makes it onto the site. A picture might be cloudy, we might not be able to tell a good story about it, it might not be quite relevant, the quality might not be good enough, or (as in this case) we might find something after it’s no longer newsworthy. These three satellite images show oil slicks on the Gulf of Mexico after the Deepwater Horizon drilling rig explosion. All are from Landsat-5 in May and July. I found them earlier this month while looking for image ideas. (Click for large images.)

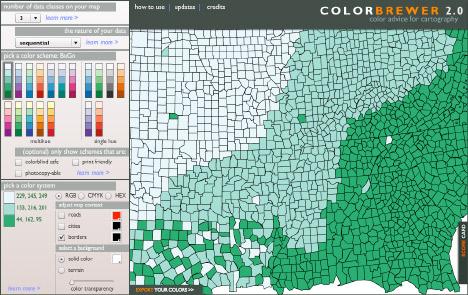

Whenever I invite someone to talk at our monthly “education and outreach” colloquia, I seem to be out of town when the talk is scheduled. Sure enough, last Wednesday, when Cynthia Brewer was here (at Goddard Space Flight Center, near Washington, DC), I was in Los Angeles.

Dr. Brewer is a geography professor at Penn State specializing in research on effective map design, especially the use of color in maps. She has also written books on design and cartography, including Designed Maps: A Sourcebook for GIS Users and Designing Better Maps: A Guide for GIS Users. Since we make a lot of maps, ColorBrewer, her online tool for selecting color schemes, has been invaluable. By all accounts she gave an excellent talk; I’m sorry I missed it.

In addition to data visualization, satellite imagery, and case studies, I intend to occasionally discuss general topics in design and typography. In this case, the typeface* Comic Sans.

Seen at the entrance to the Gibson Amphitheatre, Los Angeles, California:

A legal warning (giving up all rights to your likeness once you pass the sign) written in Comic Sans bold. The problem? Comic Sans is an extremely informal typeface, and legal text is extremely formal. Not a good combination. It’s also not a particularly readable typeface, since it was designed for short snippets of text in word balloons, not prose. (I’ve even had the thought that the sign was in a difficult-to-read font on purpose, but that’s not likely.) Comic Sans is famously infamous; it’s even been featured by the Wall Street Journal: Typeface Inspired by Comic Books Has Become a Font of Ill Will.

I’m not out to ban Comic Sans, but there are plenty of better typefaces available for public signage, from the London Underground’s Johnston, to the ubiquitous Helvetica, to the Standard Alphabets for Traffic Control Devices. (Actually, those might not be the best choice in this case, since they’re designed for legibility, not readability, but that’s a different discussion.)

Comic Sans would be good for something like lettering in a storyboard, or even its original intended use in comics. Unfortunately it would be a pretty generic comic, since Comic Sans ships with Microsoft Windows and Microsoft Office, so almost everyone with a computer has a copy. There are plenty of good (and free) alternatives out there. At this point the most appropriate use of Comic Sans is probably ironic.

In case you’re wondering, we use 13-point Georgia for body text, 11-point Lucida Sans for captions & annotations, and 21-point Helvetica Neue Medium Condensed in the masthead. And how was the show? Awesome.

* Typefaces are colloquially referred to as fonts, but technically a font is a specific size of a specific weight & style of a typeface. A typeface is all sizes of a specific weight & style (bold, italic, etc.). A typeface family is the next step up in the hierarchy, including variant versions of a typeface, sometimes even as extreme as sans-serif and serif versions. I’m pedantic, so I usually use the precise terms.

The Elegant Figures blog will be a place for me (Robert Simmon, the Earth Observatory’s lead visualizer) to talk about some of the data visualization and information design we do on the Earth Observatory. I’m going to kick things off with the description of an image we made in May that showed ash from the Eyjafjallajökull Volcano using data from two satellites. The image benefits from a little science backstory, but feel free to skip down three paragraphs if you’re only interested in the infovis aspects.

For several days in April of 2010, air traffic in Europe was almost completely shut down by ash from Iceland’s Eyjafjallajökull volcano. The widespread flight cancellations weren’t caused by ash filling the skies all over Europe, but by uncertainty in the ash’s location. Without knowing exactly where the ash was, air traffic controllers couldn’t risk allowing passenger flights to embark.

Eyjafjallajökull erupting on May 18, 2010.

Currently, ash forecasts are based on computer models that predict the movement of volcanic ash based on its observed location and altitude, combined with wind speed and direction. In the case of Eyjafjallajökull, the initial location of the ash was known (the volcano’s summit), but not the altitude. This initial uncertainty grew as the ash blew towards Europe, dispersing and moving up and down in the atmosphere. The only way to constrain the computer model’s forecasts is to observe the ash, which is difficult to do from the ground and both difficult and dangerous to sample directly in the air. (There is a network of instruments, AERONET, that measures aerosols, the small atmospheric particles of which volcanic ash is one type, but the instruments are spread too sparsely to enable precise predictions.) However, flying in space, far above the ash, some satellites can track it even as it spreads over long distances. Forecasters can use measurements from these satellites to improve predictions of ash movement, reducing the amount of airspace closed to flights.

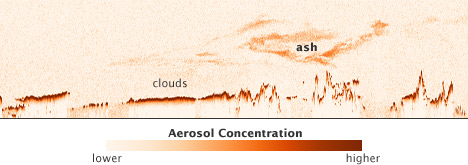

The CALIPSO satellite (the acronym is a bit more memorable & succinct than its formal name, the Cloud-Aerosol Lidar and Infrared Pathfinder Satellite Observation) uses reflected light from a laser beam to measure cloud and aerosol particles in the atmosphere. Critically for ash forecasting, it measures both the location and altitude of particles. It can also see ash within clouds. There’s one catch: CALIPSO only measures aerosols in a line directly underneath the satellite.

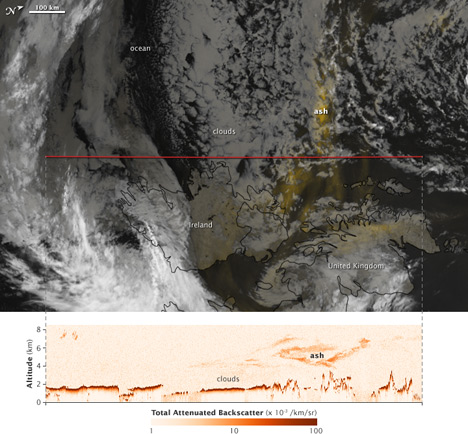

On the Earth Observatory, we wanted to show CALIPSO data to complement the large number of visible light, photo-like images we’d acquired, and to advertise the data, which is considered experimental. The nature of the data presented an interesting visualization challenge: it’s a two-dimensional curtain stretching from the Earth’s surface to the stratosphere, along the satellite ground track. Simply displaying the data by itself isn’t very informative:

Vertical profile of ash from Eyjafjallajökull on May 16, 2010.

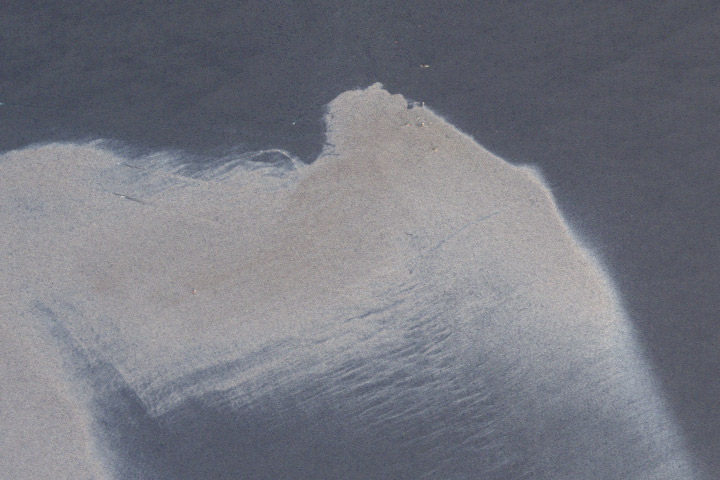

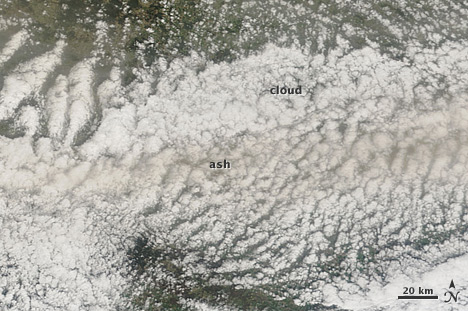

The data need context: a map of the area (just off the west coast of Ireland) & the location of the ash at the time CALIPSO passed overhead. Unfortunately, the data were acquired at night—so we couldn’t simply use a natural-color image. During the day ash is often pretty easy to spot:

Eyjafjallajökull ash mixed with clouds in the skies above Germany, April 16, 2010.

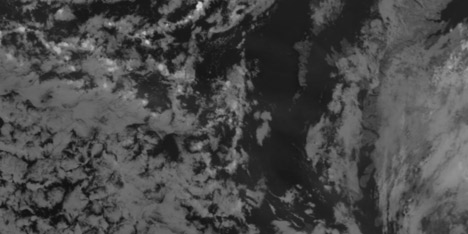

Our eyes can pick out the gray or brown plume, even if it’s mixed with clouds (at least if some of the ash is above the clouds). At night, however, satellites observe clouds with thermal infrared data, which is essentially a measure of temperature. Volcanic ash and clouds (at the same altitude) will almost always have the same temperature, and will look the same in thermal infrared imagery. In addition, a thin ash plume may be completely invisible in thermal infrared wavelengths (the data here are from MODIS, or more formally the Moderate Resolution Imaging Spectroradiometer):

Thermal infrared image (inverted: cold clouds are white) of Eyjafjallajökull ash near Ireland and the U.K.

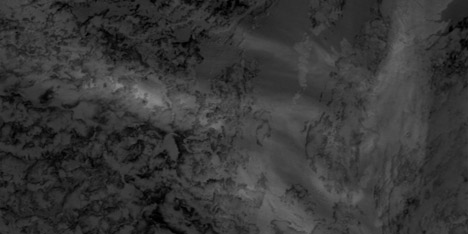

Ash, however, emits thermal infrared radiation slightly differently than water, so two images taken at different wavelengths (in this case 11µm and 12µm, both in the thermal infrared) will appear different from each other where there’s ash, but not where there are clouds. By subtracting one of the two images from the other, you end up with an image that shows ash. Sort of:

Split window image of ash from Eyjafjallajökull.

It isn’t perfect, but the technique (called a split window) gives a qualitative picture of ash distribution.
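The subtraction described above is simple enough to sketch in a few lines of Python. This is a minimal illustration, not the processing we actually ran: the brightness temperatures are made-up values for a tiny 2×3 scene, and the function names are hypothetical. The sign convention (12µm minus 11µm) is chosen so that the pixels where the two bands differ most come out lightest.

```python
# Hypothetical brightness temperatures in kelvin (NOT real MODIS data).
bt_11um = [[280.0, 262.0, 255.0],
           [281.0, 258.0, 240.0]]
bt_12um = [[279.5, 264.5, 254.8],
           [280.4, 261.0, 239.7]]

def split_window(band_a, band_b):
    """Pixel-by-pixel difference (band_a - band_b) of two same-sized images."""
    return [[a - b for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(band_a, band_b)]

def to_grayscale(image):
    """Linearly stretch values to 0..255 so the largest difference is white."""
    flat = [v for row in image for v in row]
    low, high = min(flat), max(flat)
    span = (high - low) or 1.0  # avoid dividing by zero on a flat image
    return [[round(255 * (v - low) / span) for v in row] for row in image]

difference = split_window(bt_12um, bt_11um)  # 12µm minus 11µm
gray = to_grayscale(difference)

for row in gray:
    print(row)
```

In this toy scene the middle column has the largest difference between the two bands, so it ends up brightest after the stretch. A real image would use an array library and calibrated data, and the result would still be qualitative.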

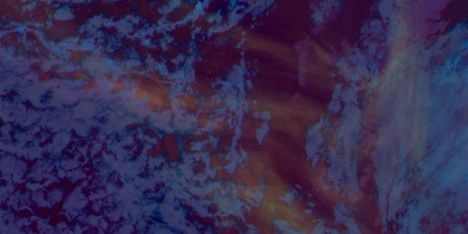

By itself a split-window image isn’t very informative: for the most part ash appears slightly lighter than the background, whether it is ocean, cloud, or land. We needed to come up with a trick to better distinguish ash from the background. Our first attempt was to combine the split-window image (12µm minus 11µm) in the red channel with the original bands, 11µm and 12µm, in the green and blue channels. Next we combined two different split windows (using different wavelengths) and an inverted thermal infrared channel (so clouds would at least be lighter than water). Neither worked:

Both were unattractive and (even worse) neither showed the ash particularly well. They’re understandable if you’ve spent a career analyzing satellite images, but not if you’re a novice. The image needed to be somewhat familiar, and the ash needed to clearly stand out from the background. I ended up using an image compositing technique. With Photoshop (this would work in any good photo-editing program) I combined the split window image (which showed the ash) with an inverted copy of one of the thermal infrared channels using a layer mask. With a layer mask, bright areas in a grayscale image will be opaque, and dark areas will be transparent. By assigning the layer mask to a solid yellow image layer, the ash appeared as yellow areas on a background similar to the satellite images shown on every TV weather forecast:

Final image combining the ash with thermal infrared data.
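For anyone without Photoshop, the layer-mask step described above boils down to a per-pixel blend. The sketch below is my own minimal Python version of that idea, with hypothetical function names and made-up 8-bit pixel values; it is not what Photoshop does internally, just the standard mask-weighted average.

```python
YELLOW = (255, 255, 0)

def invert(gray):
    """Invert an 8-bit grayscale image so cold (dark) areas become white."""
    return [[255 - v for v in row] for row in gray]

def composite(background_gray, mask_gray, color=YELLOW):
    """Blend a solid color over a grayscale background using a layer mask.

    A mask value of 255 gives fully opaque color; 0 lets the background
    show through unchanged; values in between mix the two.
    """
    result = []
    for bg_row, mask_row in zip(background_gray, mask_gray):
        row = []
        for bg, m in zip(bg_row, mask_row):
            alpha = m / 255
            row.append(tuple(round(alpha * c + (1 - alpha) * bg)
                             for c in color))
        result.append(row)
    return result

# Hypothetical 2x2 inputs (NOT real satellite data).
thermal_ir = [[200, 90], [60, 30]]  # low values stand in for cold cloud tops
ash_mask = [[0, 255], [128, 0]]     # stands in for the split-window image

image = composite(invert(thermal_ir), ash_mask)
for row in image:
    print(row)
```

Where the mask is black, the pixel stays a neutral gray from the inverted thermal image; where it is white, the pixel is pure yellow. That contrast between a single saturated color and a grayscale background is what makes the ash easy to spot.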

The ash stands out from the background because the eye highlights the yellow areas of the image before the image even reaches the conscious brain. This is known as pre-attentive processing, which I learned about in Colin Ware’s book Information Visualization: Perception for Design.

Here’s the final image, combined with the CALIPSO data showing the vertical profile of the ash:

CALIPSO ash profile and MODIS split window